Then you will be able to measure the impact of each individual component.

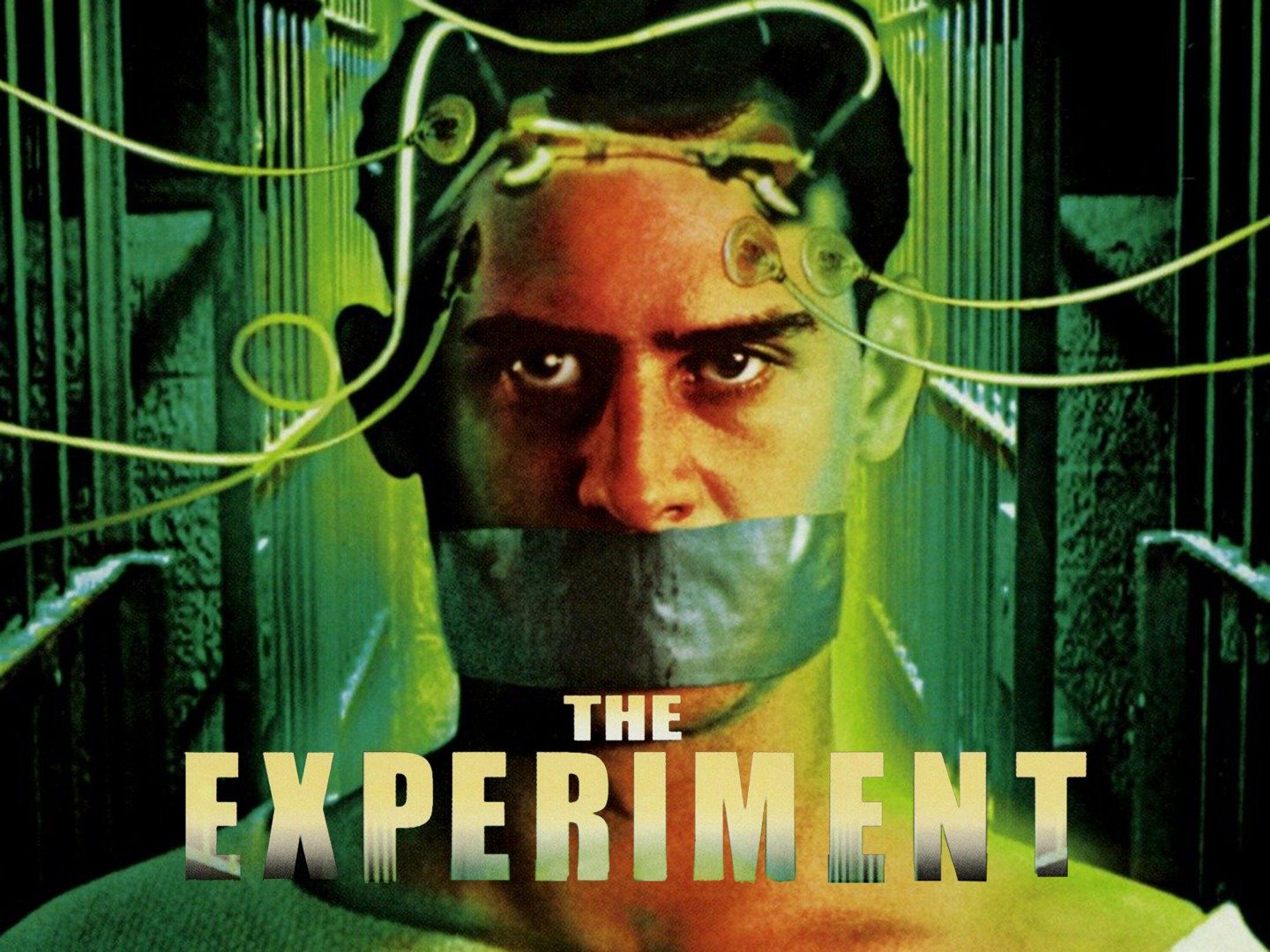

#The experiment 2010 true story series#

Trustworthy data and analyses are key to making sound business decisions, particularly when it comes to A/B testing. Ignoring data quality issues or biases introduced through design and interpretation can lead to incorrect conclusions that could hurt your product.

In the 14+ years of research since we launched our internal Experimentation Platform, we have enabled and scaled A/B testing for multiple Microsoft products, each with its own engineering and analysis challenges. One need is common to all of our partner teams: trustworthiness. If experiment results cannot be trusted, engineering and analysis efforts will be wasted and organizations will lose, you guessed it, trust in experimentation. Therefore, ensuring trustworthy experiments remains a top challenge across the industry.

But how can we ensure such trustworthy A/B testing end-to-end? Many tools and techniques have been developed to improve trustworthiness in A/B testing. They cover various practical aspects, from broad overviews of experimentation systems and their challenges, to choosing the right overall evaluation criteria, to pitfalls in interpreting experiment results and designing metrics.

In this series of blog posts, we aggregate and describe a comprehensive list of experiment design and analysis patterns used within Microsoft to increase confidence in the results of A/B testing. These patterns help automatically detect or prevent issues that would affect the trustworthiness or generalization of experiment results. Consequently, feature teams can confidently make ship/no-ship decisions based on the observed results and learn from them for future development. We divide these patterns into three major categories that track the lifecycle of an A/B experiment: the pre-experiment stage, the experiment monitoring and analysis stage, and the post-experiment stage.

In the first part of the series, we focus on the pre-experiment stage and start with the most basic step in A/B testing: formulating the hypothesis of the A/B test.

#Forming a Hypothesis and Selecting Users#

Illustration c/o Ilda Ladeira

#Formulate your Hypothesis and Success Metrics#

The first step in designing an A/B experiment is to have a clear hypothesis. The hypothesis should capture the expected effect of the change introduced by the treatment. It should also be simple: if you are making a complex change, break it down into a series of simple changes, each with its own hypothesis.
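As a rough illustration of the advice above to give each simple change its own hypothesis, the sketch below records a hypothesis as a small structured object. The `Hypothesis` class, its field names, the `split_complex_change` helper, and the checkout example are all hypothetical and chosen only for illustration; they are not part of any Microsoft tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """One simple change paired with the effect it is expected to have."""
    change: str              # what the treatment introduces
    metric: str              # hypothetical success-metric name
    expected_direction: str  # "increase" or "decrease"

    def statement(self) -> str:
        # Render the hypothesis as a single sentence that can be reviewed.
        return f"If we {self.change}, then {self.metric} will {self.expected_direction}."


def split_complex_change(steps):
    """Build one Hypothesis per (change, metric, direction) tuple."""
    return [Hypothesis(change, metric, direction) for change, metric, direction in steps]


# Hypothetical example: a checkout redesign broken into two simple changes,
# each with its own hypothesis.
hypotheses = split_complex_change([
    ("shorten the checkout form", "checkout completion rate", "increase"),
    ("show shipping cost earlier", "cart abandonment rate", "decrease"),
])

for h in hypotheses:
    print(h.statement())
```

Writing each hypothesis down in this form makes it harder to bundle several changes into one vague expectation, since every entry has to name a single change and a single expected effect.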